Extended Intelligences

For this course we were asked to develop a concept that uses AI, and AI agents in particular, preferably as a means of communication between humans and technology.

We wanted to develop a music-related concept. Our first idea was an object that would let the user jam with just their fingers in order to explore the musical world, get familiar with it, and get real-time feedback from AI on what mistakes are being made, how to improve, where to apply changes and so on. We quickly realized that this was basically impossible, both because of time constraints (the project was just 2 days long) and because of technology limitations (AI agents are still pretty slow, and responding in real time to the user’s movements demands a very quick reaction from the AI to what it sees). Nonetheless, we wanted to keep pursuing the musical jamming idea, simplifying it while keeping the main concept.

The scaled-down idea was to have the user tap out a rhythm through a sequence of touches on a touch sensor. The AI agent would then detect the touch pattern and suggest a song with a similar rhythmic structure, giving the user a YouTube link.
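To make the idea of a "touch pattern" more concrete, here is a minimal sketch of how a sequence of taps could be turned into something an agent can compare against songs. It is not the code from our project (which we never got working). It assumes the sensor gives us a list of tap timestamps in seconds, and the function name `rhythm_signature` and the half-beat rounding are hypothetical choices made for illustration.

```python
from statistics import median


def rhythm_signature(tap_times):
    """Turn tap timestamps (in seconds) into a rough, tempo-independent pattern.

    Assumption: tap_times is a list of increasing timestamps, one per touch.
    """
    if len(tap_times) < 3:
        raise ValueError("need at least three taps to describe a rhythm")

    # Inter-onset intervals: the gaps between consecutive taps.
    intervals = [b - a for a, b in zip(tap_times, tap_times[1:])]
    if any(gap <= 0 for gap in intervals):
        raise ValueError("tap times must be strictly increasing")

    # Use the median gap as the "typical" beat length.
    beat = median(intervals)

    # Express each gap as a multiple of the beat, rounded to the nearest half beat.
    pattern = [round(gap / beat * 2) / 2 for gap in intervals]

    # Rough tempo estimate in beats per minute.
    bpm = round(60 / beat)
    return {"bpm": bpm, "pattern": pattern}


taps = [0.0, 0.5, 1.0, 1.25, 1.5, 2.0]
print(rhythm_signature(taps))
```

Because every gap is divided by the typical beat length, tapping the same rhythm faster or slower gives the same `pattern` and only changes `bpm`. That pair is the kind of compact description that could be passed to the song agent as part of its prompt.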

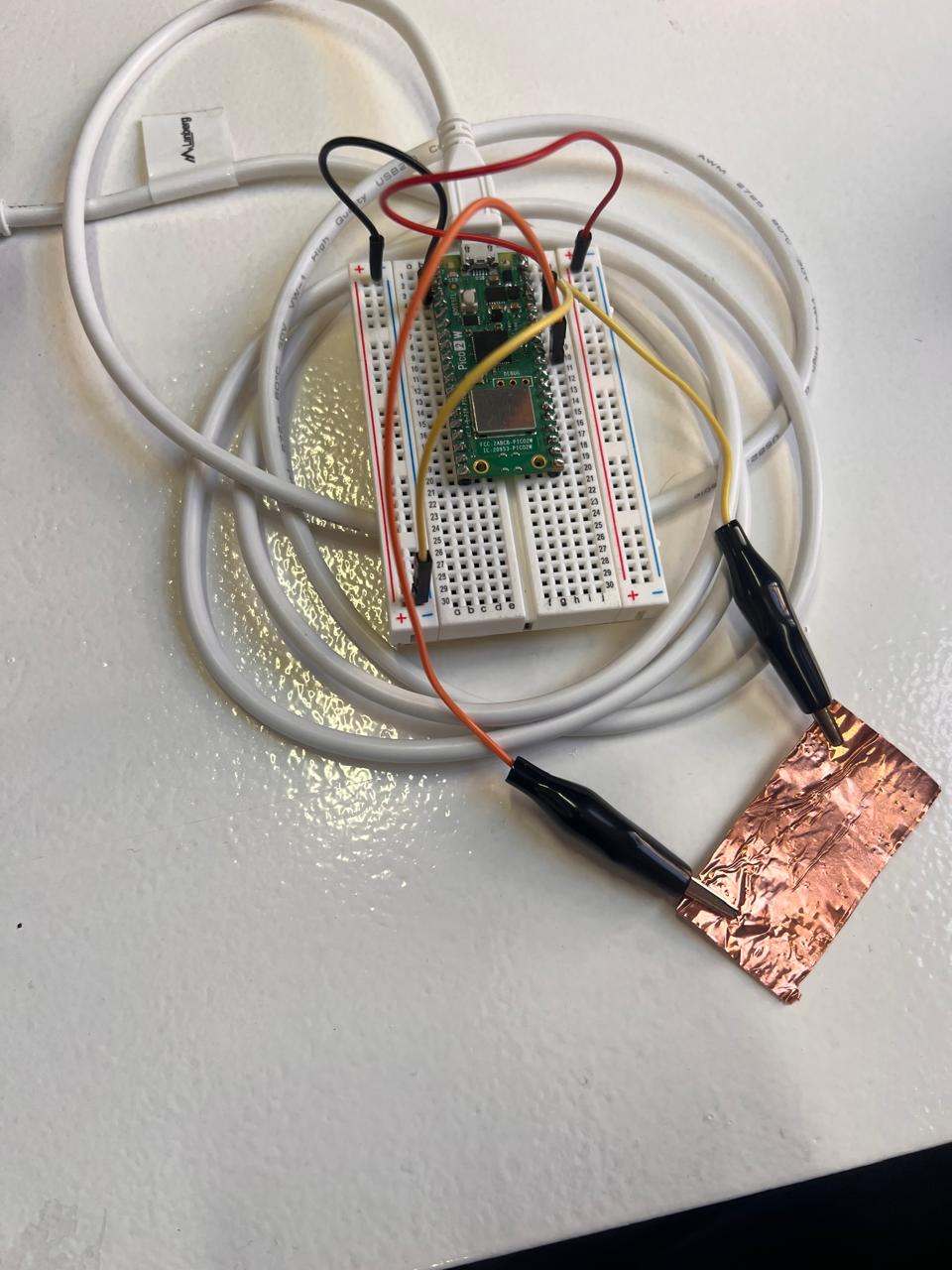

Elements used: Raspberry Pi, PC with Arduino, self-made touch sensor, breadboard, AI agents

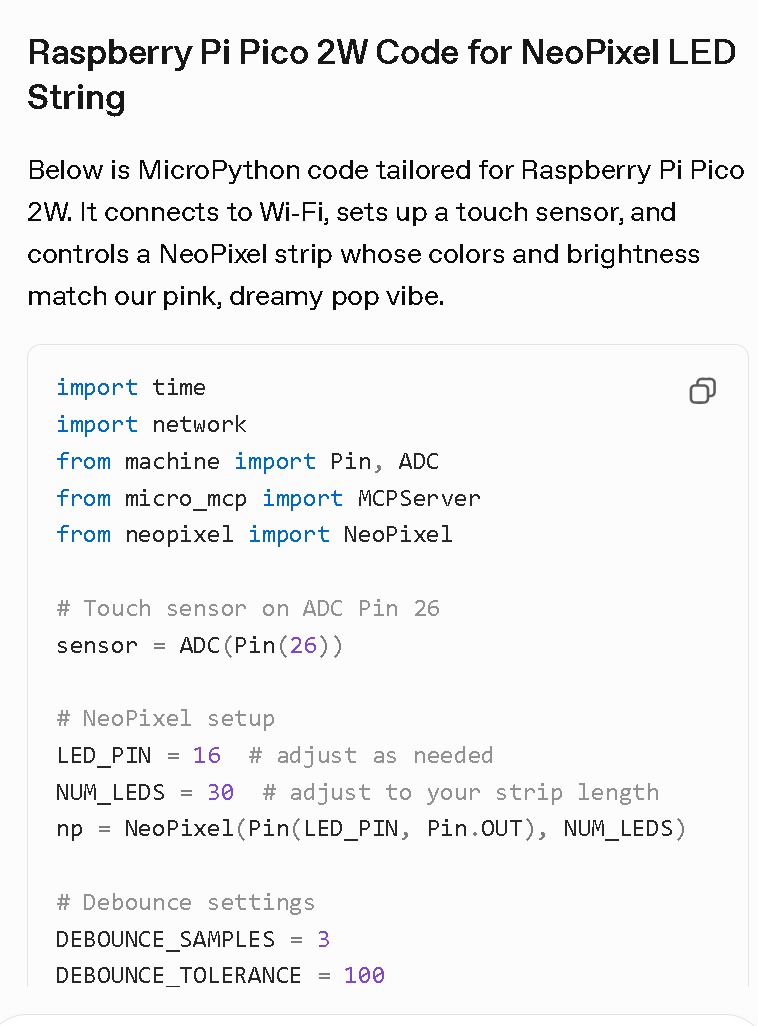

First, we needed to connect a touch sensor to the Raspberry Pi board so that we could pass the touch data on to the agents. As we didn’t have one at our disposal, we made one by connecting a thin sheet of copper to the breadboard, which thanks to its conductivity would work as a touch sensor.

We then moved on to the code, to make sure that, given the touch input, the agent would do what was asked, but this is where the problems started.

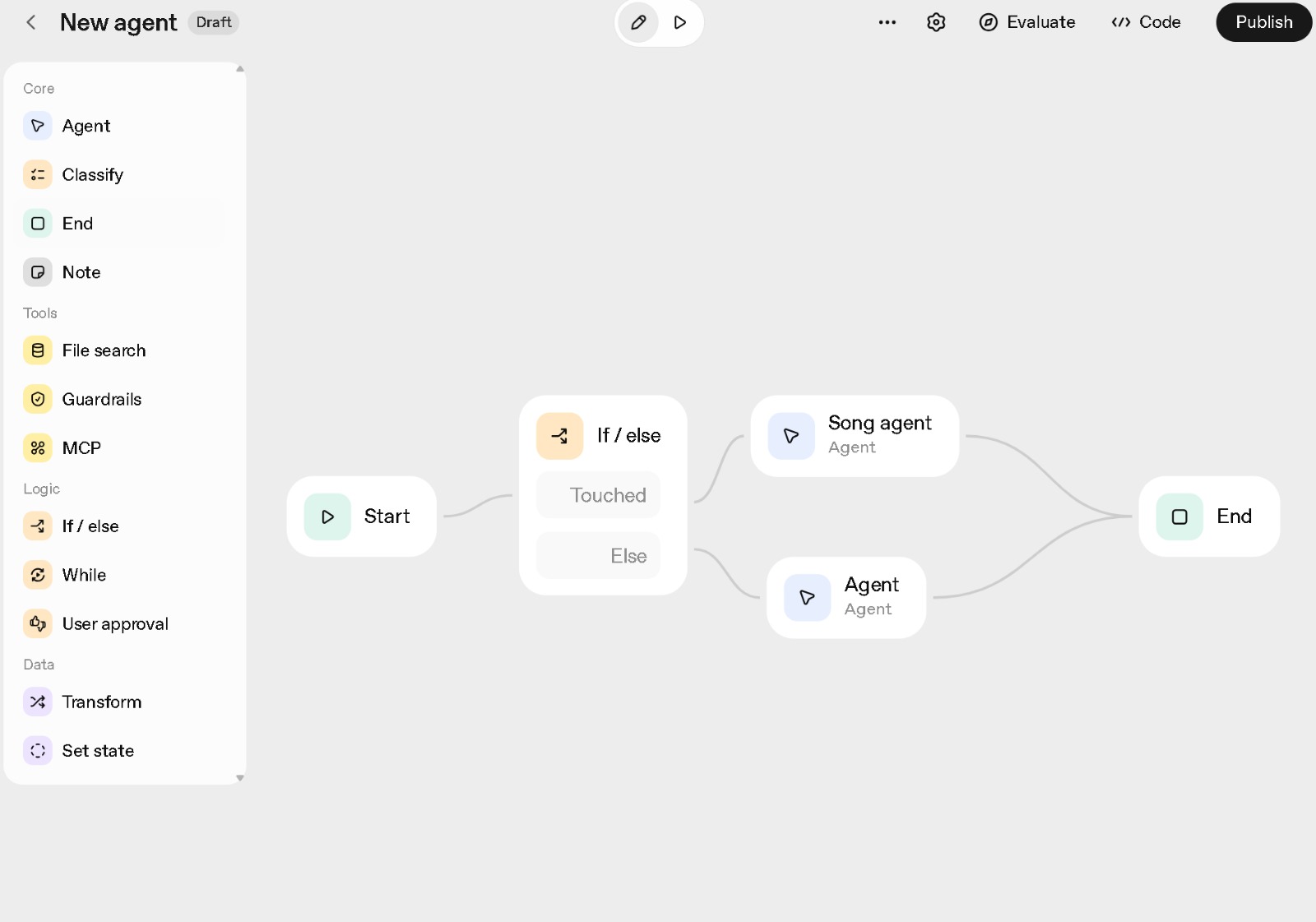

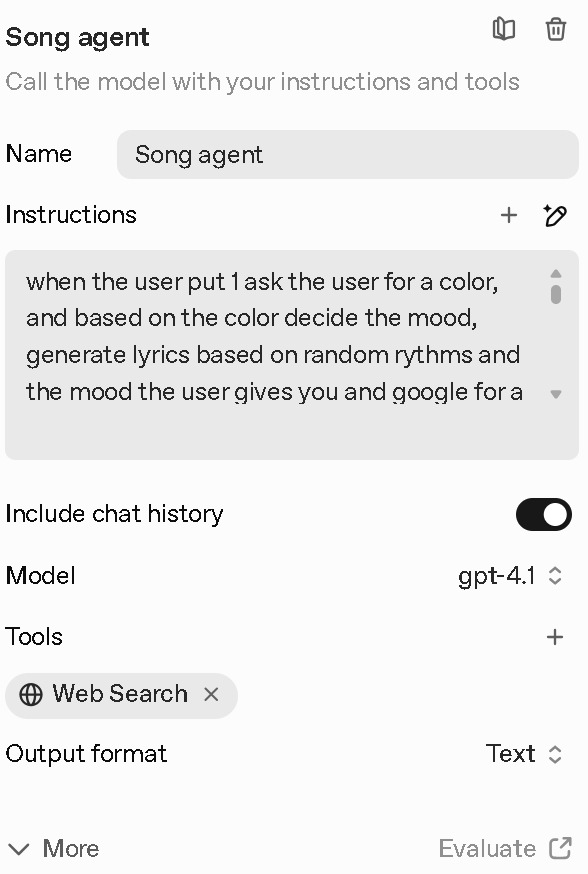

We had very little time to make this work, and neither we nor the teacher who tried to help us could figure out how to get what we wanted, even though we tried pretty hard. We still wanted to have at least an approximate visualization of what would have happened had we managed to get the physical objects communicating with the digital agents, so we went on to organize the AI agent processes.

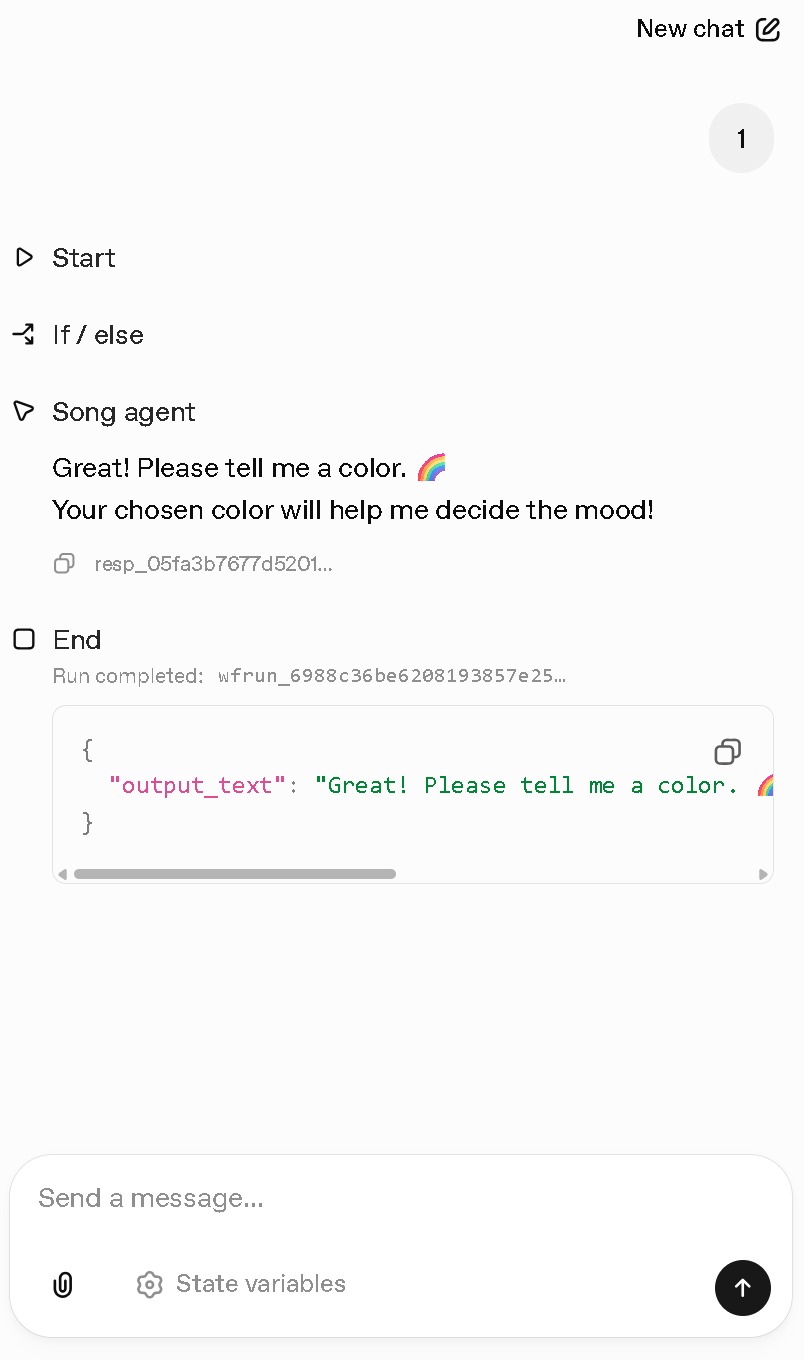

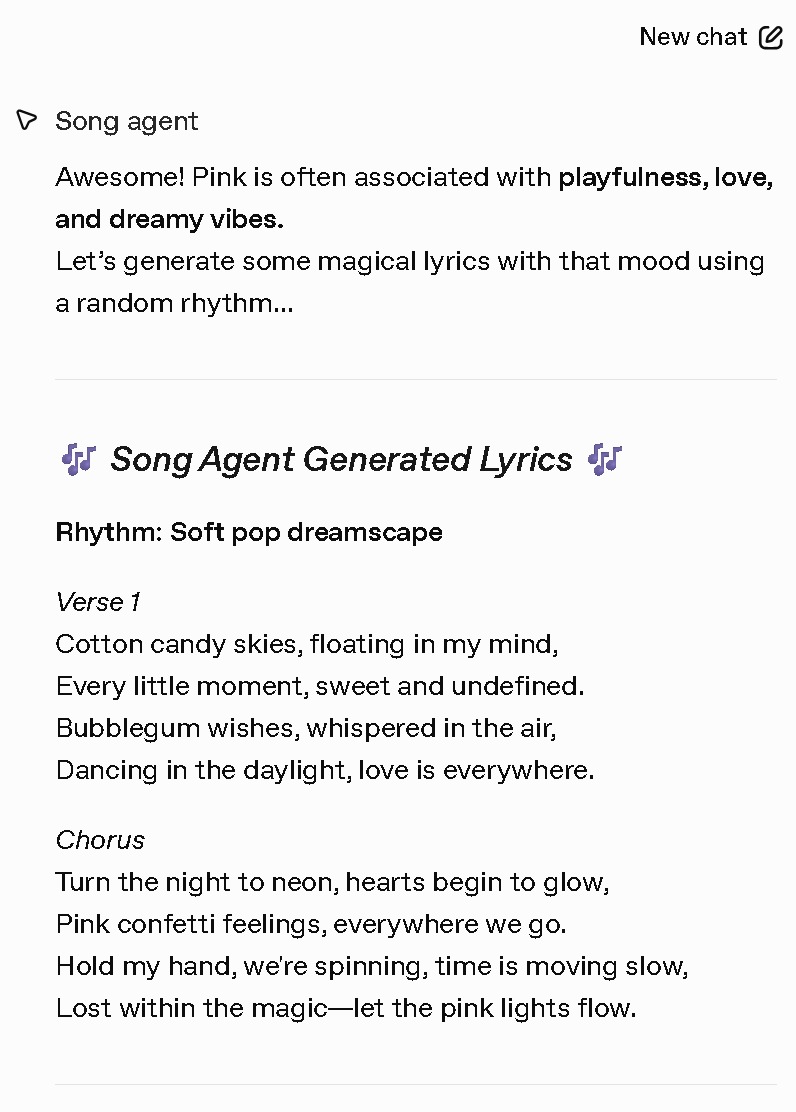

This is what the structure of the concept looked like. After the start is given, the user touches the sensor. When a touch is detected, the “Song AI agent” searches the internet for a track with a pattern similar to the one made by the user, then suggests it. The second agent generates lyrics according to a color chosen by the person, and then the cycle and the experience come to an end. The process can of course be repeated as many times as the user wants.
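The flow described above can be sketched in a few lines of Python. This is only an illustration of the order of the steps, not what we actually built: `run_cycle`, `fake_song_agent` and `fake_lyrics_agent` are hypothetical names, and the two agents are placeholders that return fixed text instead of calling a real AI service or searching the internet.

```python
from typing import Callable, Dict, List, Optional


def run_cycle(
    tap_times: List[float],
    color: str,
    song_agent: Callable[[List[float]], str],
    lyrics_agent: Callable[[str], str],
) -> Optional[Dict[str, str]]:
    """Run one start-to-end cycle of the experience."""
    # No touch detected yet: nothing to hand to the agents.
    if not tap_times:
        return None

    # Agent 1: suggest a track whose rhythm resembles the tapped pattern.
    song = song_agent(tap_times)

    # Agent 2: write lyrics based on the color the person picked.
    lyrics = lyrics_agent(color)

    return {"song": song, "lyrics": lyrics}


# Placeholder agents (assumptions) so the flow runs without any AI service.
def fake_song_agent(tap_times: List[float]) -> str:
    return f"(song suggestion for a {len(tap_times)}-tap pattern)"


def fake_lyrics_agent(color: str) -> str:
    return f"(lyrics inspired by {color})"


result = run_cycle([0.0, 0.5, 1.0], "blue", fake_song_agent, fake_lyrics_agent)
print(result)
```

Passing the agents in as functions keeps the cycle itself independent of whichever AI tool ends up behind them, and repeating the experience is just a matter of calling `run_cycle` again with new taps and a new color.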

REFLECTIONS

Even though not getting things to work as expected was a bit frustrating, I still really enjoyed this short course and the learning process. In the previous trimester we had another course about AI that was very philosophical, and it left me a bit unsatisfied in terms of knowledge acquired. This course was so practical that I could finally see what some innovative and genuinely helpful applications of AI in design might be. Understanding how AI agents function was particularly fascinating to me, and linking them to real-life technological elements like sensors was super interesting: I found the combination of these elements very inspiring, and it points towards new and innovative paths. I’m still learning a lot about AI, and at times it has felt to me a bit like technology for technology’s sake (besides using it to acquire knowledge on a topic), but seeing it applied in a project, even a very quick one, helped me better understand its potential and become more familiar with working with it. Overall, the course forced me out of my usual way of thinking and gave me the spark to research more about the use of AI in designing systems or objects that bring innovation, and I’ll certainly keep digging deeper into the topic.